The Problem

According to Dyslexia International, at least 1 in 10 people are affected by dyslexia, i.e. more than 700 million children and adults worldwide.

Dyslexia affects three major areas: reading, writing, and spelling. This project tackles reading, as it seemed the most feasible area for a technology-based intervention.

Children rely on special educators.

- Children who are diagnosed early on are placed in tutoring classes to supplement their schooling.

- Research has shown the Orton-Gillingham approach to be very effective. However, tutoring of this kind tends to be expensive worldwide.

Adults use web-based accessibility tools.

- Adults rely on tools and utilities that make reading easier, usually Chrome extensions and website plugins.

- One well-known plugin is Agastya by Oswald Labs. The gap here is that these solutions only work on websites, not in the physical world.

How might we help individuals with dyslexia read books and other textual information better at home and on the go?

Overview

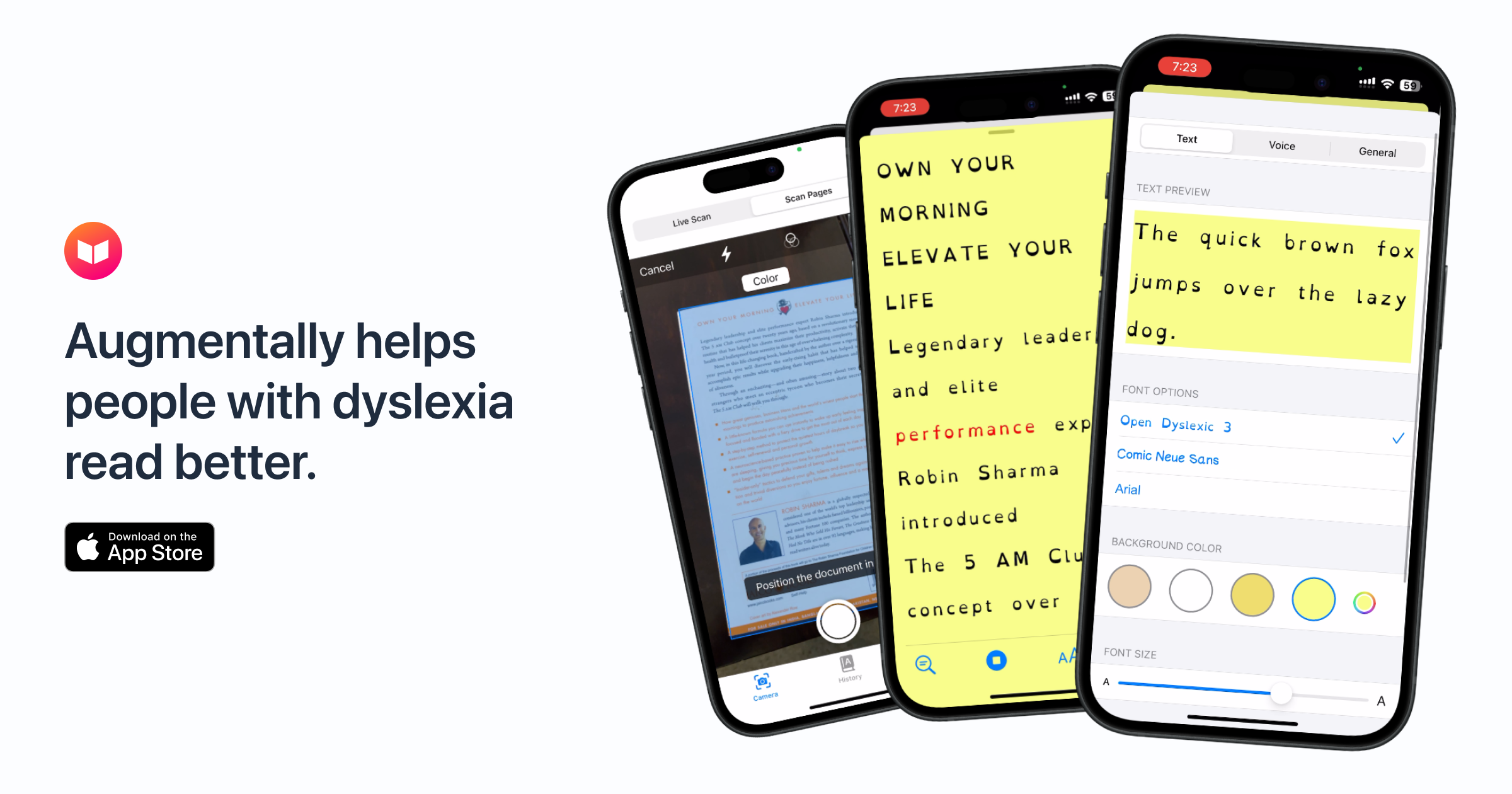

Augmenta11y is the culmination of a two-year iterative design process. Here is a recent demo video that highlights the problem being solved and how the app solves it.

- We observed a 21% improvement in reading times when children used Augmenta11y.

- I developed a cross-platform app that works on iPhones, iPads, and Android devices.

- Augmenta11y has over 2,000 downloads worldwide and has been featured in media outlets such as The History Channel, Times of India, and more.

User Research

Through an extensive literature review and stakeholder interviews, we narrowed down the factors affecting readability for a person with dyslexia to elements such as special fonts, increased letter spacing, line height, and contrasting background colors.

Research Insights

Children will benefit the most from this.

There are two main audiences for this solution. The primary audience is children aged 8–14 who are currently struggling to read and might be in a tutoring program. The secondary audience is adults with dyslexia who could use this as an occasional utility.

There is no universal solution to improving readability.

Everyone has different preferences for what helps them read better. The app should provide options during onboarding to let users pick their ideal colors, fonts, and spacing.

Lack of dyslexia-specific solutions.

At the time of our research, most apps used by people with dyslexia were not designed specifically for them. This led to a lack of personalization and a feeling of compromise when using them.

Design

The Implications

Animations, animations, and more animations.

Since we identified children as the primary audience, animations and micro-interactions become more important than ever. Children have short attention spans, and many children with dyslexia are also diagnosed with ADHD, so keeping them on task is a challenge.

Personalization is king.

Since there is no universal setting for better readability, the app needs to allow users to change their typography preferences quickly and often. This is why there is a personalization section in the onboarding, and why it remains available in the bottom navigation throughout the app.

Design Iterations

The app has gone through three iterations of design and development, with the most recent one being the biggest update to its visual design and suite of features. The images below show the progress and design maturity the app has gained over the years.

Testing and Evaluation

The app has undergone two rounds of usability testing. For the first round in 2019, we partnered with Disha Counselling Center in Mumbai, India. The test subjects were a group of 39 students aged 8–14 years. The students were given two passages appropriate for their age group. The task was to read one passage normally and the other using the app. To ensure that one passage was not inherently easier to read than the other, we alternated which passage was read using the app. The time each student took to read each passage and their text styling preferences were recorded.

In December 2020, we partnered with the Dyslexia Institute of Indiana as I made Augmenta11y a formal research project at Georgia Tech. I conducted ten one-hour usability sessions on the new version of the app and am currently analyzing the test data and writing a full journal paper on this research.
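The alternation described above is a form of counterbalancing. A minimal sketch of how such an assignment could be generated is shown here; the passage labels `"A"` and `"B"`, the `Assignment` type, and the `counterbalance` function are hypothetical names used for illustration and are not taken from the study materials.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Assignment:
    participant_id: int
    passage_with_app: str      # passage read using the app
    passage_without_app: str   # passage read unaided


def counterbalance(participant_ids, passages=("A", "B")):
    """Alternate which passage is read with the app across participants."""
    first, second = passages
    assignments = []
    for index, pid in enumerate(participant_ids):
        if index % 2 == 0:
            assignments.append(Assignment(pid, first, second))
        else:
            assignments.append(Assignment(pid, second, first))
    return assignments


# Hypothetical participant IDs 1..4, for illustration only.
plan = counterbalance(range(1, 5))
for a in plan:
    print(a.participant_id, "app:", a.passage_with_app,
          "unaided:", a.passage_without_app)
```

With an odd number of participants the two orderings cannot be perfectly balanced, so one ordering would be used once more than the other.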

Solution

An animated and personalized onboarding.

- Right from the very first screen, the app uses animations to help children understand what the app does.

- To reduce cognitive load, it follows a one-task-per-screen philosophy, asking them to select their preferred fonts, colors, spacing, voice accent, and playback speed one at a time.

- All the font and background color options are backed by research and known to improve readability. The app also has 14 different read-aloud voices and an option to try different playback speeds.

Snap, pinch, zoom, and crop.

- Users can snap a picture of the book they want to read, and use smart cropping options such as zoom, pinch, and bounding boxes to focus on specific parts of the image.

- There are helpful labels along the way to guide their actions. Once they have picked the appropriate bounding box, the app uses Optical Character Recognition to parse the image and display it in the font and color settings chosen by the user.
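As a rough illustration of the last step, the sketch below takes text that has already been recognized by OCR and wraps it in the user's chosen styling. It is not the app's implementation: `ReadingPreferences`, `render_reader_html`, and all default values (including the yellow hex code and spacing numbers) are assumptions made for this example, and the OCR step itself is left out.

```python
from dataclasses import dataclass
from html import escape


@dataclass
class ReadingPreferences:
    # All defaults are illustrative assumptions, not the app's real settings.
    font_family: str = "OpenDyslexic"
    background_color: str = "#FFF9C4"  # a pale yellow
    letter_spacing_em: float = 0.12
    line_height: float = 1.8


def render_reader_html(recognized_text: str, prefs: ReadingPreferences) -> str:
    """Wrap OCR output in a styled block using the user's preferences."""
    style = (
        f"font-family: '{prefs.font_family}', sans-serif; "
        f"background-color: {prefs.background_color}; "
        f"letter-spacing: {prefs.letter_spacing_em}em; "
        f"line-height: {prefs.line_height};"
    )
    # Blank lines separate paragraphs; single line breaks inside a paragraph
    # are treated as scan line wraps and collapsed into spaces.
    paragraphs = [p for p in recognized_text.split("\n\n") if p.strip()]
    body = "\n".join(
        f"<p>{escape(' '.join(p.split()))}</p>" for p in paragraphs
    )
    return f'<div style="{style}">\n{body}\n</div>'


sample = "The quick brown\nfox jumps.\n\nA second paragraph."
print(render_reader_html(sample, ReadingPreferences()))
```

Keeping the preferences in one small object means the same recognized text can be re-rendered whenever the user changes a setting, without scanning the page again.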

Reading word by word while keeping track of progress.

- The reader mode allows children to read their books in a dyslexia-friendly way through their chosen fonts, colors, and spacings. This is supplemented by a read-aloud mode that reads the text for them in the voice and playback speed they have chosen.

- Users can pause, start again, and keep track of how much of the reading is left through a progress bar. All of these settings can be changed in real time. The app also has a History mode that saves all textual information scanned by the user.
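The word-by-word reading and progress tracking described above could be modeled along these lines. This is a simplified sketch with hypothetical names (`WordReader`, `next_word`, `progress`); it ignores speech synthesis and timing, and it interprets "start again" as both resuming and restarting, since the text does not say which is meant.

```python
class WordReader:
    """Steps through text one word at a time and reports progress."""

    def __init__(self, text: str):
        self.words = text.split()
        self.position = 0      # index of the next word to read
        self.paused = False

    def next_word(self):
        """Return the next word, or None when paused or finished."""
        if self.paused or self.position >= len(self.words):
            return None
        word = self.words[self.position]
        self.position += 1
        return word

    def pause(self):
        self.paused = True

    def resume(self):
        self.paused = False

    def restart(self):
        self.position = 0
        self.paused = False

    @property
    def progress(self) -> float:
        """Fraction of words already read, suitable for a progress bar."""
        if not self.words:
            return 1.0
        return self.position / len(self.words)


reader = WordReader("one two three four")
print(reader.next_word(), reader.progress)
```

A real read-aloud mode would advance `next_word` on a timer derived from the chosen playback speed; that part is omitted here.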

Outcomes

We saw a 21.2% reduction in the amount of time taken to read text using Augmenta11y. It was also observed that 85.7% of the students found the OpenDyslexic font helpful and 76.9% of them preferred to have a yellow background to the text.
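For readers unfamiliar with how such a figure is typically derived, a percentage reduction in reading time is usually computed relative to the unaided time. The formula below assumes that convention; the source does not state the exact calculation, and the symbols $t_{\text{unaided}}$ and $t_{\text{app}}$ are introduced here for illustration.

```latex
\[
\text{reduction (\%)} \;=\; \frac{t_{\text{unaided}} - t_{\text{app}}}{t_{\text{unaided}}} \times 100
\]
```

As a purely hypothetical example (not study data), a passage read in 100 seconds unaided and 78.8 seconds with the app would give a reduction of $(100 - 78.8) / 100 \times 100 = 21.2\%$.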

We also presented a poster at India HCI 2018, an ACM SIGCHI conference, and will be publishing this research as a paper at the 9th International Conference on Computer Communication and Informatics (ICCCI 2019) in the HCI track.

Some Milestones

- October 2018: First beta/proof of concept of Augmenta11y is launched!

- March 2019: After six months in beta, Augmenta11y officially launches worldwide!

- May 2019: Augmenta11y starts getting featured in the press and media, including The History Channel, Times of India, YourStory, and more.

- December 2019: Augmenta11y crosses 2,000 downloads on the iOS App Store and Google Play Store!

- October 2020: Augmenta11y gets a ground-up redesign with many new features and enters beta.

- January 2021: I'm working hard on getting the new version of the app out of beta while publishing a new research paper on it.

Download the latest beta on iOS here. The old v1 can be found here for Android & iOS devices.

Learnings

- Building empathy for a user population I did not know a lot about has helped me grow immensely as a designer.

- My initial observation sessions, and the many usability sessions I conducted with these children, made me realize how important real-world context is, and how much more you can learn about your users by being with them instead of reading about them.

- Since I both designed and developed this app, my knowledge of feasibility and technical constraints improved drastically, and I was able to set realistic product milestones.

Next Steps

- My main priority is to iron out all of the beta bugs and get the v2 app on the App Store and the Google Play store immediately.

- A long-term goal is to establish partnerships with more special education institutions to raise awareness of the app and its low-cost benefits compared to other solutions.

- Get more media coverage on the app's many new features, and continue getting feedback from our target users to make the app more friendly towards children.